CMUcam: analyse and track colours with your mobile autonomous robots (NXTCam)

When starting out with programmable personal robotics, a camera is not usually the first sensor you try, not least because of its cost and complexity. However, many people soon move on to visual data, as vision plays such an important role in how we humans perceive the world around us.

Carnegie Mellon University is well aware of this and has offered a generic embedded camera for small robots, the CMUcam, for several years now. Different electronics manufacturers build it from the specifications published by the university. We offer two CMUcam-based products on this site:

- Mindsensors NXTCam for the Lego Mindstorms NXT kit

- The Pixy (CMUcam5) camera

The CMUcam is not exactly an embedded camera, like those found on a PC or mobile phone. It is both a bit more than that and a bit less.

CMUcam: First and foremost a vision tool

The CMUcam is a system combining a camera and a microprocessor. It is a low-end artificial vision device with a serial port for communicating with a PC or robot, and its microprocessor provides basic built-in image analysis functions.

This system was designed specifically for personal robotics, for leisure or teaching, and to be both simple to use and inexpensive. The CMUcam is intentionally a low-capacity device, as it is intended for small robots in which power, processing capacity and memory are limited.

Several generations of the camera have been released. Their specifications can be found on the Carnegie Mellon University website: http://www.cs.cmu.edu/~cmucam

How do you use a CMUcam and what is it for?

The main purpose of the CMUcam is to detect colours that the user has configured in advance. The integrated processor detects these colours automatically and provides the robot with raw data: the number of areas found (known as “blobs”), their coordinates and the colour detected in each.

You can then use this information to program your robot to react to these blobs of colour in order to follow an object, a line, etc.
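As an illustration, here is a minimal Python sketch of how a program might turn blob data into a steering decision. The `Blob` record, the `steering_command` function, the "follow the largest blob" policy and the default image width of 176 pixels are all hypothetical choices for this example, not part of any CMUcam API; on the NXT you would express the same logic as an NXT-G program.

```python
from typing import NamedTuple, Optional, Sequence


class Blob(NamedTuple):
    """Hypothetical record for one detected blob (bounding box in pixels)."""
    colour: int  # number of the configured colour
    x: int       # left edge of the box
    y: int       # top edge of the box
    w: int       # box width
    h: int       # box height


def steering_command(blobs: Sequence[Blob], target_colour: int,
                     image_width: int = 176, gain: float = 1.0) -> Optional[float]:
    """Return a turn value in [-gain, gain] (negative = left, positive = right),
    or None when no blob of the target colour is visible.

    image_width=176 is an assumed value; use your camera's real resolution.
    """
    candidates = [b for b in blobs if b.colour == target_colour]
    if not candidates:
        return None
    # Follow the largest blob of the wanted colour (a hypothetical policy).
    target = max(candidates, key=lambda b: b.w * b.h)
    centre_x = target.x + target.w / 2
    # Horizontal offset from the image centre, normalised to [-1, 1].
    half = image_width / 2
    return gain * (centre_x - half) / half
```

For example, `steering_command([Blob(1, 130, 40, 20, 20)], target_colour=1)` gives about 0.59, meaning "turn right". The sign convention assumes x grows to the right and the camera is mounted the right way up; a real robot would then map this value to motor powers.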

NXTCam

Introduction


In the rest of this article, we will use a specific example to illustrate our presentation: the Mindsensors NXTCam, which is available on this site. The description below is therefore specific to this camera, but most of the concepts apply equally to other CMUcam implementations.

Using a CMUcam always involves two stages:

- Configuring the colour map

- Using the camera on the robot (tracking)

Features

The technical features of the NXTCam are as follows:

- Field of vision: approximately 43°

- Frame rate: approximately 30 frames per second

- USB and RJ12 connection for the PC and the robot respectively

- Power consumption: 42 mA maximum at 4.7 V

The camera returns the following information in real time, as other NXT sensors do: the number of objects (8 maximum), the colour of each object, and the coordinates of each object in the form of a box or a line.
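The field of view of approximately 43° lets a program convert a horizontal pixel coordinate into an approximate bearing to the object. The Python sketch below assumes an ideal pinhole camera and assumes that the 43° figure is the horizontal field of view; the function name and the image width you pass in are hypothetical, so check the resolution of your own camera.

```python
import math

FIELD_OF_VIEW_DEG = 43.0  # approximate value from the feature list; assumed horizontal


def bearing_deg(x_pixel: float, image_width: int,
                fov_deg: float = FIELD_OF_VIEW_DEG) -> float:
    """Angle in degrees between the camera axis and pixel column x_pixel.

    Negative means left of centre, positive right of centre (ideal pinhole model).
    """
    half_width = image_width / 2
    # Focal length in pixels implied by the field of view.
    focal_px = half_width / math.tan(math.radians(fov_deg / 2))
    return math.degrees(math.atan((x_pixel - half_width) / focal_px))
```

With an assumed width of 176 pixels, `bearing_deg(132, 176)` gives roughly 11°, and the two edges of the image map to ±21.5°.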

Installation

To use the NXTCam with your PC, you must first install its USB drivers. The Windows and Mac drivers can be obtained at http://www.mindsensors.com/index.php?module=documents&JAS_DocumentManager_op=viewDocument&JAS_Document_id=44

To install them on a PC running Windows XP, download the corresponding zip file and unzip it. Then connect the NXTCam to your PC with a USB cable. Your PC will detect the new device; in the window that opens, specify the location of the directory you have just unzipped. Repeat this operation a second time, because two drivers are installed. To install on a PC running Windows Vista or on a Mac, consult the Mindsensors website (http://www.mindsensors.com).

The CMUcam specifications describe a connection through a serial port. In its implementation, Mindsensors chose to use a USB connection instead. This is why a second driver is needed: it provides the USB-to-serial conversion, so that the NXTCam appears on the PC as a COM port.

Connecting the CMUcam to the PC

Before using the CMUcam on your robot, you must first configure it for the colours you wish to track. To do this, download the open-source NXTCamView tool from http://nxtcamview.sourceforge.net

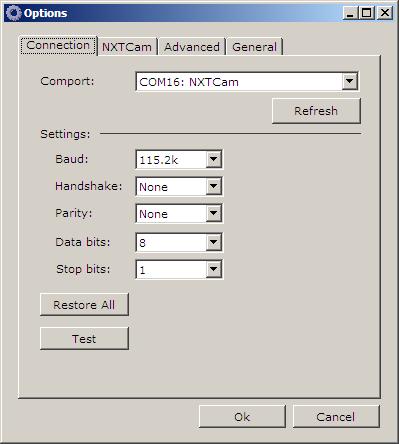

This is the NXTCamView “Options” window, which lets you configure the connection to the NXTCam. At this stage, we will concentrate on that connection. In the drop-down list, choose the COM port associated with the NXTCam and press the “Test” button. If the connection works, the message “Success: NXTCam responded” appears in green under the button. If not, check that the USB cable is correctly connected to both the NXTCam and your PC. Then click “OK”.

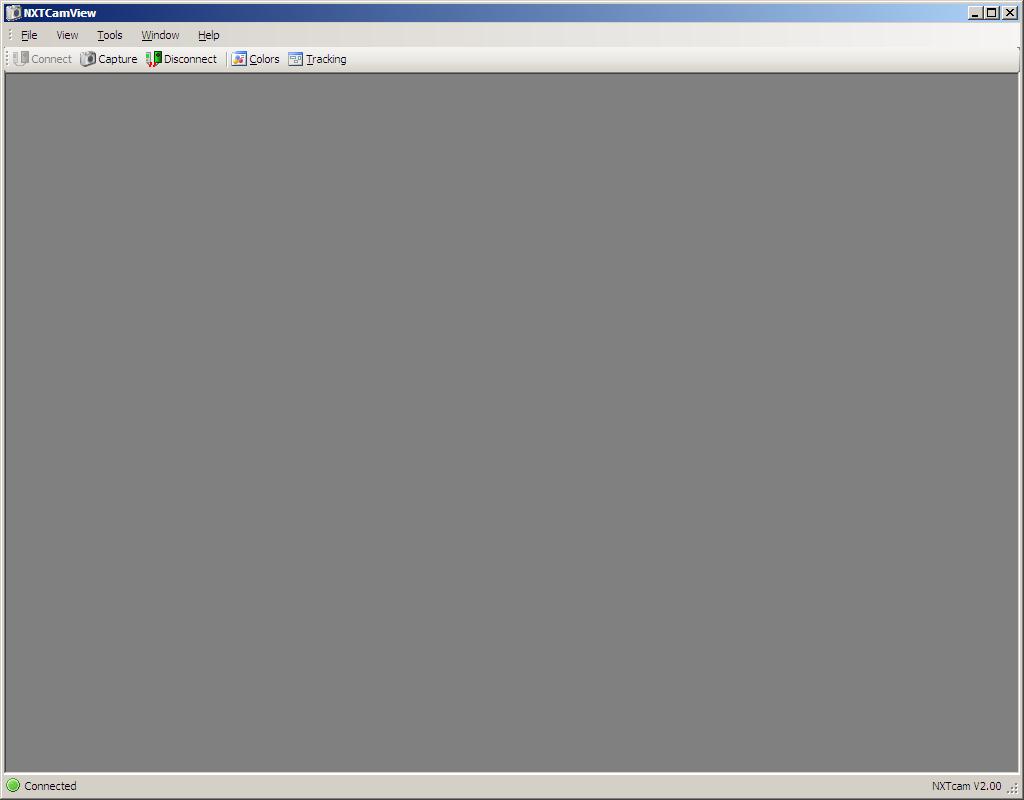

The NXTCamView work area will open. Press the “Connect” button: the red disc in the bottom left of the screen turns green.

That’s it! You are now ready to actually start using the NXTCam. The next stage is to configure the NXTCam to tell it which colours you wish to track.

Colour map settings configuration

The first step is to capture images and use them to define the colours you wish to track. For each colour, you determine the minimum and maximum intensity values of each of the three channels (red, green and blue). Together, these values define what is known as the colour map.

The difficulty is that an object's colour is rarely uniform, if only because of the camera's position and the lighting of the area. The NXTCam's image quality is also modest: the image is rather blurred, largely because of the camera's low resolution. You can improve it by adjusting the focus manually (by turning the lens) or by using the AutoWhiteBalance and AutoAdjust settings in NXTCamView.

Let’s get back to our subject: configuring the colours to be tracked. Proceed as follows:
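In code, one colour-map entry is simply an inclusive minimum and maximum for each channel, and a pixel matches when all three of its values fall inside their ranges. This minimal Python sketch assumes channel values from 0 to 255; the class name and the example limits are hypothetical, and the NXTCam's internal storage format may differ.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ColourRange:
    """One hypothetical colour-map entry: inclusive limits per channel (0 to 255 assumed)."""
    r_min: int
    r_max: int
    g_min: int
    g_max: int
    b_min: int
    b_max: int

    def matches(self, r: int, g: int, b: int) -> bool:
        """True if the pixel lies inside the range on all three channels."""
        return (self.r_min <= r <= self.r_max
                and self.g_min <= g <= self.g_max
                and self.b_min <= b <= self.b_max)


# Example: a rough "red" entry (illustrative limits only).
RED = ColourRange(r_min=150, r_max=255, g_min=0, g_max=80, b_min=0, b_max=80)
```

Here `RED.matches(200, 40, 30)` is true, while `RED.matches(200, 120, 30)` is false because the green value is out of range.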

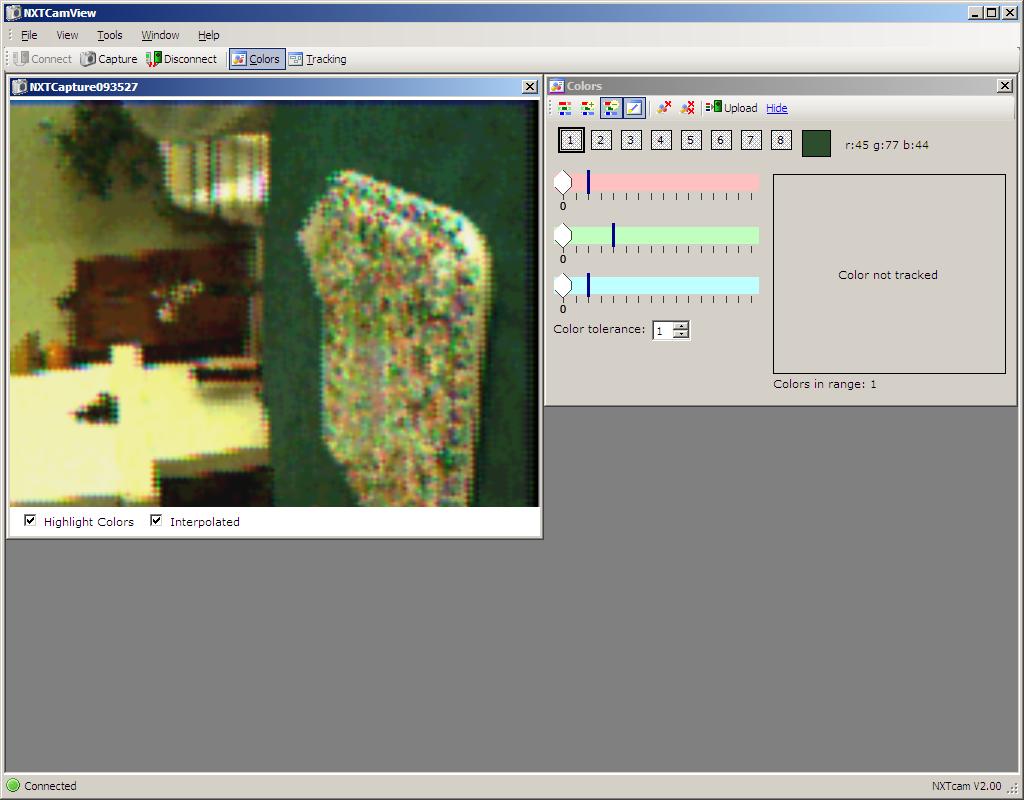

- Capture an image, i.e. take a photo using the “Capture” button in NXTCamView.

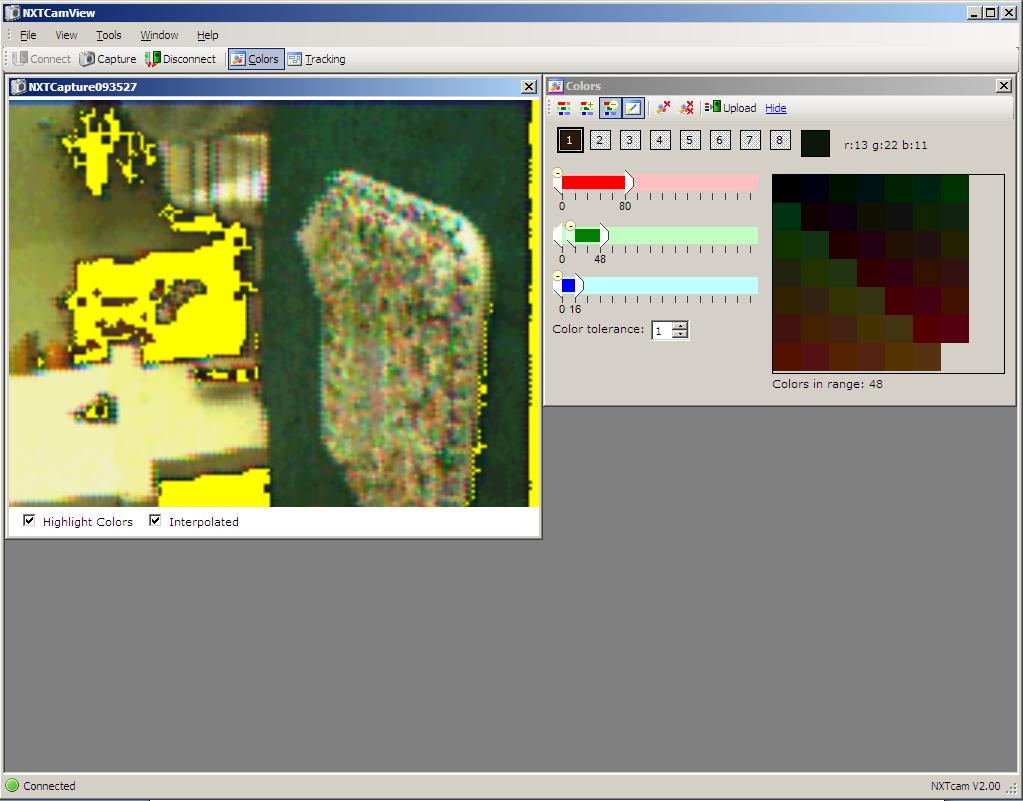

- Click on an object of the colour you are looking for in the captured image. This automatically positions the sliders for the three colour channels in the colours window and highlights, in flashing yellow, the objects detected with these settings.

- Adjust the red, green and blue ranges in the colours window by moving the corresponding sliders to set the lower and upper limits for each channel. As you change the settings, an area of varying size is highlighted in the capture window: the software is showing you which parts of the photo match your choice of colours. When the object you wish to track is completely highlighted, your colour settings are correct.

- You can define up to eight colours in this way by clicking on boxes 1 to 8 in the colours window and configuring each of them as in the previous step.

- Upload the settings to the NXTCam's embedded processor by clicking on the “Upload” button in the colours window.

- The last step is optional: test your configuration by performing a test tracking operation in NXTCamView. We describe this step in detail in the next section.
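Clicking on an object and then widening the sliders amounts to taking the colour values of a few sample pixels and adding a safety margin around them. The Python sketch below illustrates that idea under stated assumptions: the 10-unit margin, the 0 to 255 scale and the function names are hypothetical, and NXTCamView's own procedure may differ. It also enforces the limit of eight colours.

```python
from typing import Iterable, List, Tuple

Pixel = Tuple[int, int, int]   # (red, green, blue), 0 to 255 assumed
Range = Tuple[int, int]        # inclusive (min, max) for one channel
MAX_COLOURS = 8                # the colours window offers boxes 1 to 8


def range_from_samples(samples: Iterable[Pixel], margin: int = 10) -> List[Range]:
    """Per-channel (min, max) covering every sample pixel, widened by `margin`."""
    pixels = list(samples)
    if not pixels:
        raise ValueError("need at least one sample pixel")
    ranges = []
    for channel in range(3):
        values = [p[channel] for p in pixels]
        # Widen the observed range, clamped to the valid 0 to 255 scale.
        ranges.append((max(0, min(values) - margin), min(255, max(values) + margin)))
    return ranges


def add_colour(colour_map: List[List[Range]], ranges: List[Range]) -> int:
    """Append a colour to the map and return its slot number (1 to 8)."""
    if len(colour_map) >= MAX_COLOURS:
        raise ValueError("the colour map already holds eight colours")
    colour_map.append(ranges)
    return len(colour_map)
```

A wider margin tolerates uneven lighting but makes it more likely that the range also covers neighbouring colours, which is exactly the trade-off you make when dragging the sliders.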

Demonstration videos are available in Flash format at http://nxtcamview.sourceforge.net/DemoScreenCam.htm

Colour tracking

“Tracking” is the ability to extract the location of a specific colour in an image, which amounts to drawing a box around each patch of that colour. The CMUcam does this automatically by returning the position (bounding box) of each blob it identifies (up to 8 blobs per image).

There are several image processing algorithms for tracking. The CMUcam2 uses a simple algorithm that requires only a single pass over each captured frame. It works as follows: the CMUcam starts at the top left of the captured frame and examines each pixel in turn, line by line. If the pixel's value falls within the configured colour range, the pixel is marked as tracked. The algorithm then checks whether the pixel is the highest, lowest, leftmost or rightmost pixel of the tracked blob so far; if so, the blob's bounding box is extended to include it. The algorithm also keeps running sums of the horizontal and vertical coordinates of the tracked pixels. Dividing these two sums by the number of tracked pixels gives the coordinates of the blob's centre of gravity, known as the centroid.

Let’s now carry out the optional last step of the colour map configuration. NXTCamView has a tracking window (if it is not visible, click the tracking button) that lets you perform a test tracking operation while the NXTCam is still connected to the PC. If you have correctly uploaded the colours to the NXTCam in the previous step, they appear in the table at the bottom right of the tracking window. Click the “Start” button and the NXTCam starts capturing images. A graphical representation of its measurements appears in real time in the white area that covers most of the tracking window.
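The single-pass algorithm described above can be sketched in Python as follows. The image representation (a list of rows of (r, g, b) tuples), the function name and the returned fields are assumptions made for this example; like the description, it merges every matching pixel into one tracked blob per colour range and computes the centroid from the two coordinate sums.

```python
from typing import NamedTuple, Optional, Sequence, Tuple

Pixel = Tuple[int, int, int]  # (red, green, blue)


class TrackResult(NamedTuple):
    left: int
    top: int
    right: int
    bottom: int
    pixels: int                    # number of tracked pixels
    centroid: Tuple[float, float]  # (x, y) centre of gravity


def track_colour(image: Sequence[Sequence[Pixel]],
                 low: Pixel, high: Pixel) -> Optional[TrackResult]:
    """Scan the frame once, starting at the top left, line by line."""
    left = right = top = bottom = 0
    count = sum_x = sum_y = 0
    for y, row in enumerate(image):
        for x, pixel in enumerate(row):
            # Is the pixel inside the colour range on all three channels?
            if all(lo <= v <= hi for v, lo, hi in zip(pixel, low, high)):
                count += 1
                sum_x += x
                sum_y += y
                if count == 1:
                    # First tracked pixel: the box starts as a single point.
                    left = right = x
                    top = bottom = y
                else:
                    # Extend the box if this pixel lies further out.
                    left, right = min(left, x), max(right, x)
                    top, bottom = min(top, y), max(bottom, y)
    if count == 0:
        return None  # the colour is not present in this frame
    return TrackResult(left, top, right, bottom, count,
                       (sum_x / count, sum_y / count))
```

Because everything is accumulated in one pass, the memory needed is a handful of counters regardless of image size, which suits the small embedded processor the article describes.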

NXTCamView shows objects in the form of boxes of the corresponding colour together with their coordinates.

The coordinates displayed for each of the boxes are:

- C = number of the colour if you have uploaded more than one colour

- X = horizontal coordinate of the top-left corner of the box (the origin is at the top left of the window)

- Y = vertical coordinate of the same corner

- W = width of the box

- H = height of the box

- A = area covered by the box (the blob)
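To use these values in a program, it helps to derive the centre and the extent of each box. The Python sketch below mirrors the C, X, Y, W and H fields; the class and property names are hypothetical. It computes the box area as W × H, which may differ from the A value shown by NXTCamView if that value counts blob pixels rather than the whole box.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class TrackedBox:
    """Hypothetical record for one box as displayed by NXTCamView."""
    c: int  # colour number
    x: int  # left edge (origin at the top left, x grows to the right)
    y: int  # top edge (y grows downwards)
    w: int  # width
    h: int  # height

    @property
    def centre(self) -> Tuple[float, float]:
        return (self.x + self.w / 2, self.y + self.h / 2)

    @property
    def box_area(self) -> int:
        return self.w * self.h

    def contains(self, px: float, py: float) -> bool:
        """True if (px, py) is inside the box (right and bottom edges excluded)."""
        return self.x <= px < self.x + self.w and self.y <= py < self.y + self.h
```

For a box with X = 10, Y = 20, W = 30 and H = 40, the centre is at (25, 40) and the box area is 1200.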

So there we are. You now have an idea of how the CMUcam works. It supplies the coordinates of configured blobs of colour in its field of vision. You can now integrate the NXTCam into your robot and use it like any other sensor.

Installing it on the robot

Let’s continue our introduction to the CMUcam by installing the NXTCam on a Lego Mindstorms NXT robot. First attach the camera to the robot, then connect it to the Lego Mindstorms NXT intelligent brick with an RJ12 cable (the cables supplied with the kit, which look like telephone cables).

This connection both powers the NXTCam and carries its data to the microprocessor in the Lego Mindstorms NXT intelligent brick (when the power is on, a yellow LED lights up on the back of the NXTCam).

Programming using NXT-G: importing the NXTCam block

The first step is to obtain the NXT-G programming block that lets you control the camera.

The files for this block can be found on the Mindsensors site at http://www.mindsensors.com/index.php?module=documents&JAS_DocumentManager_op=viewDocument&JAS_Document_id=46

The installation procedure is a very simple, one-off process:

- Download the zip file (compressed file) and place it somewhere on your PC (it doesn’t matter where)

- Unzip the zip file

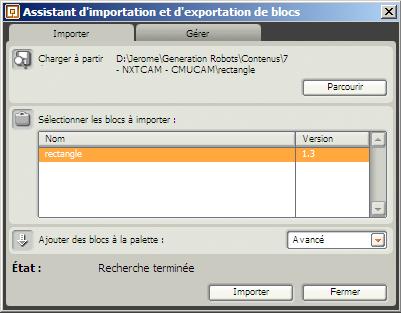

- In the NXT-G environment, click on “Block Import and Export Wizard” in the “Tools” menu

- In the window that opens, click the “Browse” button and select the file you unzipped in the previous step

- Select the block to be imported in the table

- Choose which palette you wish to add your block to using the drop-down list

- Click on “Import”

Example of NXTCam programming

Now that everything is ready, we will create a very simple program.

Good examples of more complex programs can be obtained at http://nxtcamview.wiki.sourceforge.net/Projects

The following program displays the number of tracked objects on the screen of the Lego Mindstorms NXT intelligent brick:

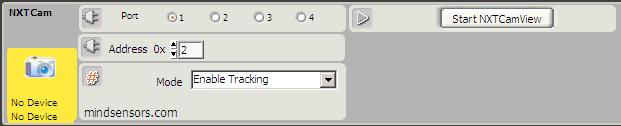

The NXTCam block is called twice:

- First with the “Enable Tracking” function, which provides the power supply to the NXTCam through the intelligent brick

- Then with the “Get First Object” function, which returns the first object detected by the NXTCam corresponding to one of the colours configured in the colour map

Other NXTCam functions let you sort objects by size or by colour, or retrieve the nth object in the list (remember that the NXTCam tracks at most eight objects at a time). Don’t hesitate to consult the block's online help.

A small note: the NXTCam block sometimes displays “No device”. Don’t worry: downloading the NXT-G program onto your robot usually works anyway. Remember to set the NXTCam's port number correctly (as for any sensor); you generally don’t need to change the address setting.
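The idea behind these functions can be mirrored on a list of detected objects held in an ordinary program. This Python sketch is only an analogy: the names are hypothetical, the 1-based numbering imitates “Get First Object”, and the real NXT-G block may order or number objects differently.

```python
from typing import List, NamedTuple, Optional


class Obj(NamedTuple):
    """Hypothetical record for one tracked object."""
    colour: int
    x: int
    y: int
    w: int
    h: int


MAX_OBJECTS = 8  # the NXTCam tracks at most eight objects at a time


def sort_by_size(objects: List[Obj]) -> List[Obj]:
    """Largest bounding box first."""
    return sorted(objects, key=lambda o: o.w * o.h, reverse=True)


def of_colour(objects: List[Obj], colour: int) -> List[Obj]:
    """Keep only the objects of one configured colour."""
    return [o for o in objects if o.colour == colour]


def nth_object(objects: List[Obj], n: int) -> Optional[Obj]:
    """1-based access, like 'first object'; None if there is no nth object."""
    if 1 <= n <= min(len(objects), MAX_OBJECTS):
        return objects[n - 1]
    return None
```

Combining them, `nth_object(sort_by_size(of_colour(objects, 1)), 1)` returns the largest object of colour 1, or None if none is visible.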

Good practices

Here are some good practices that will allow you to get the best from your CMUcam:

- Avoid choosing colours whose ranges overlap, as the camera will then find it harder to tell them apart;

- In line tracking mode, it is advisable to limit the number of colours to 1;

- The CMUcam is intended to operate under white fluorescent lighting. If the image looks reddish, an infrared source is probably too strong and must be reduced or removed. Lens filters that pass or block infrared light can be ordered from the Mindsensors website; such a filter is required to use the CMUcam outdoors, because of the infrared light emitted by the sun; and

- The CMUcam performs better when there is strong colour contrast, e.g. a red ball on a white background, and when the lighting is uniform. In a natural environment these two factors may limit the NXTCam's performance, but it remains an interesting sensor for introducing beginners to the use of visual sensors in robotics.
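The first piece of advice can be checked mechanically: two colour-map entries are ambiguous if some RGB value falls inside both of them, which happens only when their ranges overlap on all three channels. Here is a minimal Python sketch, assuming each entry is stored as three inclusive (min, max) pairs; the function names and any example ranges are hypothetical.

```python
from itertools import combinations
from typing import List, Sequence, Tuple

Range = Tuple[int, int]  # inclusive (min, max) for one channel


def ranges_overlap(a: Sequence[Range], b: Sequence[Range]) -> bool:
    """True if at least one (r, g, b) value lies inside both colour-map entries."""
    # Two boxes in RGB space intersect only if they overlap on every channel.
    return all(a_lo <= b_hi and b_lo <= a_hi
               for (a_lo, a_hi), (b_lo, b_hi) in zip(a, b))


def overlapping_pairs(colour_map: Sequence[Sequence[Range]]) -> List[Tuple[int, int]]:
    """Return the 1-based numbers of every pair of overlapping colours."""
    return [(i + 1, j + 1)
            for (i, a), (j, b) in combinations(enumerate(colour_map), 2)
            if ranges_overlap(a, b)]
```

For instance, a "red" entry of R 150 to 255, G 0 to 80, B 0 to 80 and an "orange" entry of R 200 to 255, G 60 to 140, B 0 to 60 overlap, so a pixel such as (220, 70, 30) would count as both colours.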

Generation Robots (http://www.generationrobots.co.uk)

All use and reproduction subject to explicit prior authorization