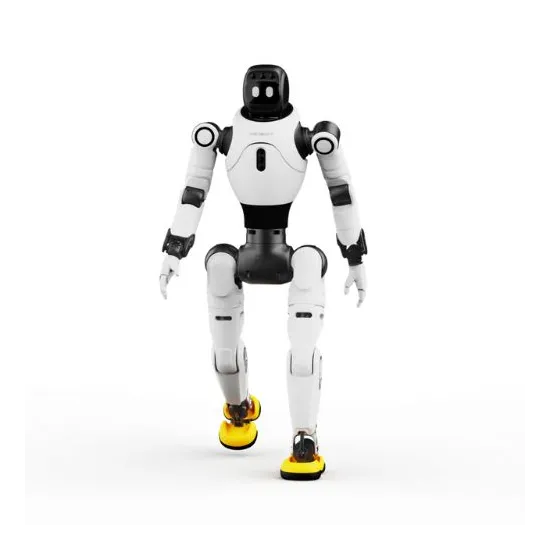

Resources for the Agibot X2 Ultra humanoid robot

FAQ – Agibot X2 Ultra humanoid robot

What is the difference between the X2 and the X2 Ultra?

Compared with the X2, the Agibot X2 Ultra adds a 3D LiDAR, an RGB-D camera, a 4G/5G module, an Orin NX board (157 TOPS), additional hardware interfaces, and support for secondary development. This makes it the preferred version for advanced navigation, perception, and development projects.

Can the Agibot X2 Ultra humanoid robot navigate autonomously?

Official resources list autonomous navigation and obstacle avoidance among its features. However, the manufacturer's FAQ states that some advanced functions depend on an advanced autonomous driving package, so their availability should be assessed against the intended use case.

Can the X2 Ultra automatically return to its charging station?

Yes, but it requires a dedicated charging station.

Which sensors are useful for a perception or navigation project?

The Agibot X2 Ultra includes a 3D LiDAR, an RGB-D camera, several RGB cameras, and a touch sensor. Together, these sensors suit projects that combine environmental perception, interaction, and navigation.

What level of human-robot interaction can be expected?

The robot supports multimodal interaction based on vision, voice, touch, and facial expressions. It is therefore suited to use cases where presence, expressiveness, and user interaction play an important role.

Is the robot suitable for a software development or integration project?

Yes, secondary development is supported. For a structured project, it is advisable to confirm in advance the exact scope of what is provided: documentation, software interfaces, level of openness, technical support, and included options.

What should be checked before purchase?

Before ordering, it is recommended to confirm which options are actually included, the level of technical support, which software functions are enabled, the network requirements, whether a charging station is needed, and the integration conditions for your target environment.